Understand the risks associated with Sub-Optimal Diagnostics.

What is a “False Alarm”?

The definition of a False Alarm unfortunately depends on your particular perspective. In a general context, it is the improper reporting of a failure to the operator of the equipment or system. In tackling the range of possibilities that could compromise the proper reporting of a failure, DSI has identified one specific cause that is also the primary contributor to the experience of False Alarms: the “Diagnostic-Induced” False Alarm, or more simply the “Diagnostic False Alarm”.

Diagnostic False Alarms

The textbook example of a “Diagnostic False Alarm” occurs when the diagnostic equipment (usually on-board BIT) misreports the functional status (test results) of whatever the sensor is designed to detect within its Test Coverage. If the sensor itself was failing while the “sensed” function was working correctly, this is a textbook case of a diagnostic-induced false alarm. This will generally be the outcome whenever a sensor is in diagnostic ambiguity with the function of the hardware it is supposed to be sensing. In that case, the diagnostic design was inadequate and therefore unable to isolate the hardware function from the faulty sensor.
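The idea of a sensor being in diagnostic ambiguity with the function it monitors can be sketched in a few lines of Python. This is only an illustration, not how eXpress represents a design: the test names, the failure-mode names and the `ambiguity_groups` helper are all hypothetical. The sketch groups failure modes that are detected by exactly the same set of tests, since those are the modes the diagnostics cannot tell apart.

```python
from collections import defaultdict

def ambiguity_groups(coverage):
    """Group failure modes that share an identical test signature.

    `coverage` maps each test name to the set of failure modes that would
    cause that test to fail (a hypothetical, simplified coverage model).
    """
    # Build the signature of each failure mode: the set of tests that detect it.
    signatures = defaultdict(set)
    for test, modes in coverage.items():
        for mode in modes:
            signatures[mode].add(test)

    # Failure modes with the same signature cannot be isolated from each other.
    groups = defaultdict(list)
    for mode, tests in signatures.items():
        groups[frozenset(tests)].append(mode)
    return sorted(sorted(group) for group in groups.values())

# Hypothetical design: the only test that sees a pump failure is also
# tripped by a failure of the pressure sensor itself.
baseline = {
    "pressure_bit": {"pump_failure", "pressure_sensor_failure"},
    "valve_position_bit": {"valve_stuck"},
}

# Same design with an added (hypothetical) sensor self-test.
improved = dict(baseline, sensor_self_test={"pressure_sensor_failure"})

baseline_groups = ambiguity_groups(baseline)
improved_groups = ambiguity_groups(improved)
print(baseline_groups)
print(improved_groups)
```

In the baseline coverage, the pump failure and the sensor failure fall into the same group, which is the condition under which a failed sensor is reported as a failed function. Adding a test that only the sensor failure can trip separates the two.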

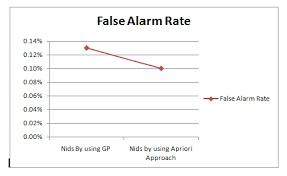

A False Alarm “rate”, like any metric derived from a measurement of “rate”, is more suitably evaluated by stochastic means, which account for random variables and any variations likely to occur over time.
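To make the stochastic view concrete, here is a minimal Monte Carlo sketch in Python. It is not the STAGE algorithm; it assumes a deliberately simple, hypothetical model in which one hardware function and its sensor each fail at a constant rate (exponential failure times), the two are in diagnostic ambiguity, and the first failure within the operating horizon raises a single alarm. The function name, failure rates and horizon are illustrative values only.

```python
import random

def simulate_false_alarms(hw_rate, sensor_rate, horizon_hours, trials, seed=0):
    """Count alarms and false alarms over many simulated lifecycles.

    Assumed model (hypothetical): failure times are exponentially
    distributed, and an alarm is false when the sensor fails before
    the hardware function it monitors.
    """
    rng = random.Random(seed)
    alarms = 0
    false_alarms = 0
    for _ in range(trials):
        hw_fail = rng.expovariate(hw_rate)          # hours until the function fails
        sensor_fail = rng.expovariate(sensor_rate)  # hours until the sensor fails
        if min(hw_fail, sensor_fail) > horizon_hours:
            continue  # nothing failed within the horizon, so no alarm
        alarms += 1
        if sensor_fail < hw_fail:
            false_alarms += 1  # the alarm was caused by the sensor, not the function
    return alarms, false_alarms

# Illustrative rates in failures per operating hour.
alarms, false_alarms = simulate_false_alarms(
    hw_rate=4e-4, sensor_rate=1e-4, horizon_hours=5000.0, trials=20000, seed=1
)
print(f"alarms: {alarms}, false alarms: {false_alarms}, "
      f"fraction false: {false_alarms / alarms:.3f}")
```

Under these assumptions the fraction of false alarms should land near `sensor_rate / (hw_rate + sensor_rate)`, which is 0.2 for the values above. Each run with a different seed gives a slightly different estimate, which is why a rate of this kind is better treated as a distribution than as a single number.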

The “Diagnostic” False Alarm rate is a metric that considers both the diagnostic integrity of the design and the sustainment lifecycle. It is obtained by simulating the eXpress Diagnostic Design of the fielded system in the STAGE operational support simulation environment.

Although a conventional deliverable of a Testability or Reliability assessment product may paint a rosy picture of a design’s diagnostic, reliability or maintainability capability, the actual experience in the sustainment lifecycle could be “alarmingly” different. For instance, designs that are judged to meet product assessment criteria in such areas as FD/FI, FA, FSA, MTBF, MTBUM, etc., may produce disturbing and contrary outcomes in a diagnostic simulation.

Many findings emerge when diagnostic constraints are considered in a simulation that exposes, for example, the inability to isolate between critical failure modes at lower levels of the design, as discussed above. Various inherent operational and diagnostic assumptions are unknowingly overlooked in any design-based assessment product that fails to consider the diagnostic impact on the support of the fielded design over time. The STAGE simulation reveals such limitations, ideally early enough in design development to influence design decisions and improve sustainment effectiveness and value.

When components are replaced in any maintenance process, the way components are grouped in the design may excel at meeting one design goal yet be a major cost driver in sustainment. This is generally caused by corrective actions that resort to replacing non-failed components along with “presumed-to-be-failed” components because there is no unambiguous means of isolation. Diagnostic ambiguity results when the Diagnostic Integrity of the design (“net” Test Coverage) is not well defined. In eXpress, the Test Coverage can be exhaustively validated early in design development or at any time during the product lifecycle(s).
